Daniel Weber, Max Schenke and Oliver Wallscheid from the LEA department have published an article in the IEEE Access journal on improved reference tracking using reinforcement learning (RL) for power electronic systems. The article introduces a conceptual extension that can be applied to arbitrary continuous control set RL algorithms and shows empirically, for both electric power grid and electric drive applications, that it significantly improves their steady-state control accuracy.

Abstract:

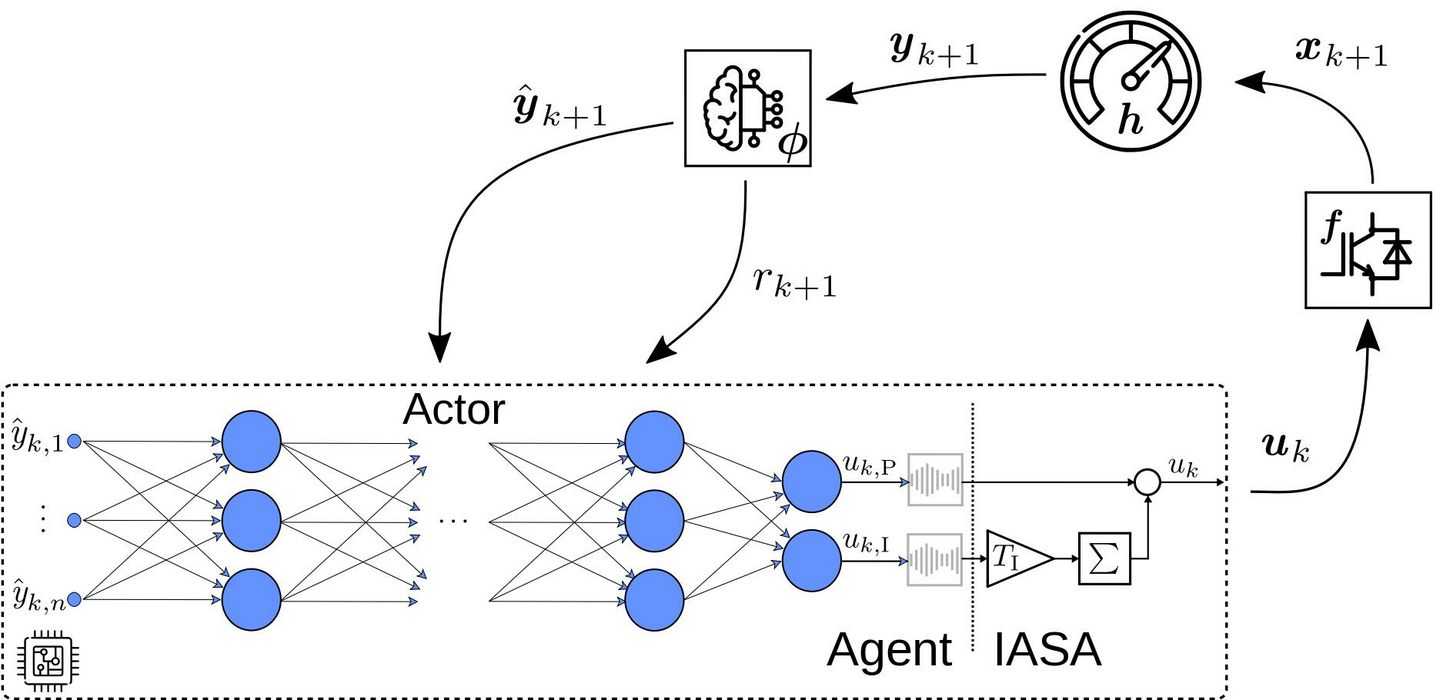

Data-driven approaches like reinforcement learning (RL) allow a model-free, self-adaptive controller design that enables a fast and largely automatic controller development process with minimum human effort. While it was already shown in various power electronic applications that the transient control behavior for complex systems can be sufficiently handled by RL, the challenge of non-vanishing steady-state control errors remains, which arises from the usage of control policy approximations and finite training times. This is a crucial problem in power electronic applications which require steady-state control accuracy, e.g., voltage control of grid-forming inverters or accurate current control in motor drives. To overcome this issue, an integral action state augmentation for RL controllers is introduced that mimics an integrating feedback and does not require any expert knowledge, leaving the approach model-free. Therefore, the RL controller learns how to suppress steady-state control deviations more effectively. The benefit of the developed method both for reference tracking and disturbance rejection is validated for two voltage source inverter control tasks targeting islanded microgrid as well as traction drive applications. In comparison to a standard RL setup, the suggested extension reduces the steady-state error by up to 52% within the considered validation scenarios.
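To illustrate the general idea of an integral action state augmentation, the following is a minimal Python sketch and not the implementation from the article. It assumes a discrete-time setting in which the accumulated control error is appended to the observation passed to the RL agent. The class name `IntegralStateAugmenter`, the sampling period `dt`, and the clamping bound `limit` (a simple anti-windup measure) are all hypothetical choices made for this example.

```python
from typing import List, Sequence


class IntegralStateAugmenter:
    """Appends a running integral of the control error to an observation.

    Hypothetical helper for illustration only; names and the clamping
    strategy are assumptions, not taken from the article.
    """

    def __init__(self, dt: float, limit: float = 1.0) -> None:
        self.dt = dt              # assumed sampling period of the controller
        self.limit = limit        # assumed bound that keeps the integral finite
        self.integral: List[float] = []

    def reset(self) -> None:
        """Clear the accumulated error, e.g. at the start of an episode."""
        self.integral = []

    def augment(
        self,
        observation: Sequence[float],
        reference: Sequence[float],
        measurement: Sequence[float],
    ) -> List[float]:
        """Return the observation extended by the integrated control error."""
        errors = [r - y for r, y in zip(reference, measurement)]
        if not self.integral:
            self.integral = [0.0] * len(errors)
        # Forward-Euler accumulation of the error, clamped to [-limit, limit].
        self.integral = [
            max(-self.limit, min(self.limit, acc + e * self.dt))
            for acc, e in zip(self.integral, errors)
        ]
        return list(observation) + self.integral


if __name__ == "__main__":
    augmenter = IntegralStateAugmenter(dt=0.5, limit=10.0)
    # Two consecutive steps with a constant reference of 1.0.
    print(augmenter.augment([0.5], reference=[1.0], measurement=[0.5]))
    print(augmenter.augment([0.75], reference=[1.0], measurement=[0.75]))
```

Because the extra state keeps growing while an error persists, the agent can observe a lasting control deviation even when the instantaneous error is small, which is the property of integrating feedback that the abstract refers to. How the article scales, bounds, or resets this state is not specified here.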

Published in: IEEE Access (Volume: 11)

Page(s): 76524 - 76536

Date of Publication: 20 July 2023

Electronic ISSN: 2169-3536

DOI: 10.1109/ACCESS.2023.3297274

Publisher: IEEE