Lecture Contents

Information theory provides the mathematical foundation of modern communication, data compression, and statistical inference. Originally developed by Claude Shannon, it quantifies information, uncertainty, and the fundamental limits of data transmission and storage.

This course offers a rigorous introduction to the central concepts of information theory. Students will explore entropy, mutual information, and relative entropy, and understand their operational significance in compression and communication systems. The curriculum covers both lossless and lossy source coding, channel capacity, and the fundamental limits of reliable communication over noisy channels.
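The three information measures named above can be computed directly from their definitions. The following is a minimal Python sketch, assuming discrete distributions given as plain lists of probabilities and logarithms to base 2, so all results are in bits. The function names are illustrative and are not taken from the course material.

```python
import math

def entropy(p):
    """Shannon entropy H(X) in bits of a pmf given as a list of probabilities."""
    # Terms with p_i = 0 are skipped, following the convention 0 * log 0 = 0.
    return -sum(pi * math.log2(pi) for pi in p if pi > 0)

def relative_entropy(p, q):
    """Relative entropy (KL divergence) D(p || q) in bits."""
    total = 0.0
    for pi, qi in zip(p, q):
        if pi > 0:
            if qi == 0:
                return math.inf  # p puts mass where q puts none
            total += pi * math.log2(pi / qi)
    return total

def mutual_information(joint):
    """I(X;Y) = D(p(x,y) || p(x)p(y)) in bits, for a joint pmf joint[x][y]."""
    px = [sum(row) for row in joint]          # marginal of X (row sums)
    py = [sum(col) for col in zip(*joint)]    # marginal of Y (column sums)
    pairs = [(x, y) for x in range(len(px)) for y in range(len(py))]
    p_xy = [joint[x][y] for x, y in pairs]
    p_x_p_y = [px[x] * py[y] for x, y in pairs]
    return relative_entropy(p_xy, p_x_p_y)

print(entropy([0.5, 0.5]))                               # 1.0
print(mutual_information([[0.5, 0.0], [0.0, 0.5]]))      # 1.0 (Y = X)
print(mutual_information([[0.25, 0.25], [0.25, 0.25]]))  # 0.0 (independent)
```

Writing mutual information as the relative entropy between the joint distribution and the product of its marginals makes the link between the two quantities explicit: I(X;Y) is zero exactly when X and Y are independent.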

Classical results, such as Shannon’s source coding theorem and the channel coding theorem, are derived from first principles. Strong emphasis is placed on building intuition for the mathematical concepts and on their practical interpretation in real-world systems. Beyond communication, the course highlights connections to machine learning, statistics, signal processing, and networked systems.
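As an illustration of the source coding theorem, the Python sketch below builds a binary Huffman code with the standard-library `heapq` module and compares its expected codeword length L with the entropy H(X). For an optimal prefix code such as Huffman's, H(X) ≤ L < H(X) + 1, with L = H(X) when all probabilities are powers of 1/2. The helper names and the example distribution are illustrative assumptions.

```python
import heapq
import itertools
import math

def huffman_code(probs):
    """Binary Huffman code for symbols 0..n-1; returns {symbol: codeword}."""
    if len(probs) == 1:
        return {0: "0"}  # a single symbol still needs a one-bit codeword
    counter = itertools.count()  # tie-breaker so heapq never compares dicts
    heap = [(p, next(counter), {i: ""}) for i, p in enumerate(probs)]
    heapq.heapify(heap)
    while len(heap) > 1:
        # Merge the two least probable subtrees, prefixing 0 and 1 to their codewords.
        p0, _, code0 = heapq.heappop(heap)
        p1, _, code1 = heapq.heappop(heap)
        merged = {s: "0" + c for s, c in code0.items()}
        merged.update({s: "1" + c for s, c in code1.items()})
        heapq.heappush(heap, (p0 + p1, next(counter), merged))
    return heap[0][2]

def expected_length(probs, code):
    """Average codeword length in bits per symbol."""
    return sum(p * len(code[i]) for i, p in enumerate(probs))

def entropy(p):
    """Shannon entropy in bits."""
    return -sum(pi * math.log2(pi) for pi in p if pi > 0)

probs = [0.5, 0.25, 0.125, 0.125]
code = huffman_code(probs)
print(code)                                            # {0: '0', 1: '10', 2: '110', 3: '111'}
print(entropy(probs), expected_length(probs, code))   # 1.75 1.75
```

For a distribution that is not dyadic, for example `[0.4, 0.3, 0.2, 0.1]`, the expected length exceeds the entropy but stays within one bit of it, as the theorem requires.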

Course Contents

- Entropy, Relative Entropy, and Mutual Information: Definitions, properties, and the chain rule

- Asymptotic Equipartition Property (AEP): Typical sequences and data compression

- Source Coding: Lossless compression, Huffman coding, and Shannon’s source coding theorem

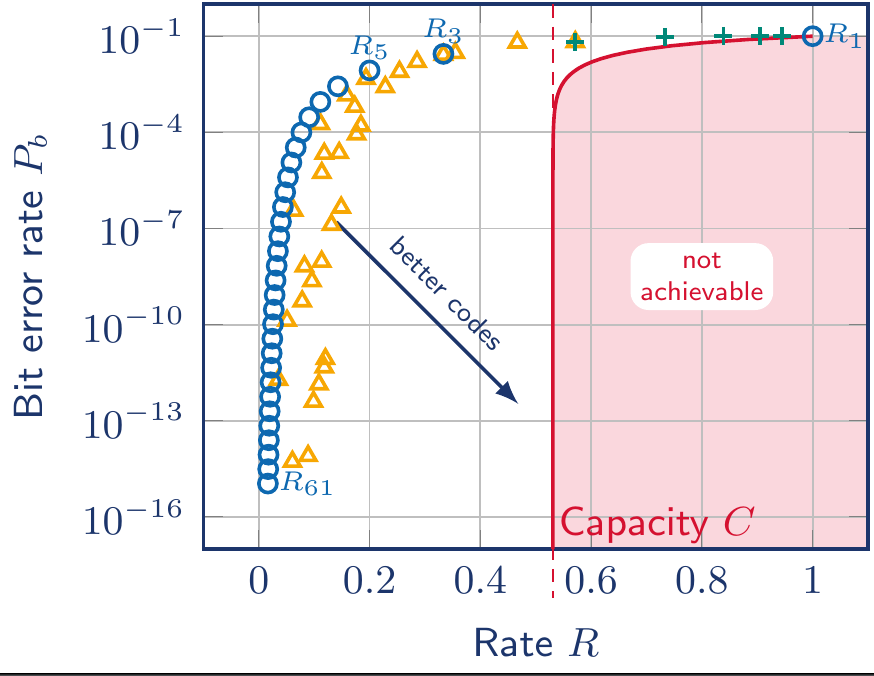

- Channel Capacity: Symmetric channels, the channel coding theorem, and the Shannon limit

- Differential Entropy: Information measures for continuous random variables

- The Gaussian Channel: Capacity of power-constrained channels and the Shannon-Hartley theorem

- Rate-Distortion Theory: Fundamental limits of lossy data compression

- Information Theory and Statistics: Method of types, large deviation theory, and hypothesis testing

- Network Information Theory: Introduction to multiple access and broadcast channels
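Two of the capacity results listed above have simple closed forms that can be evaluated numerically. This Python sketch assumes a binary symmetric channel (BSC) with crossover probability p, whose capacity is C = 1 - h(p) bits per channel use, and a band-limited AWGN channel with bandwidth B and linear signal-to-noise ratio S/N, for which the Shannon-Hartley theorem gives C = B log2(1 + S/N) bits per second. The 3 kHz bandwidth and 30 dB SNR in the usage lines are illustrative values only, not figures from the course.

```python
import math

def binary_entropy(p):
    """Binary entropy h(p) in bits, with h(0) = h(1) = 0."""
    if p in (0.0, 1.0):
        return 0.0
    return -p * math.log2(p) - (1 - p) * math.log2(1 - p)

def bsc_capacity(p):
    """Capacity of a binary symmetric channel with crossover probability p (bits per use)."""
    return 1.0 - binary_entropy(p)

def shannon_hartley_capacity(bandwidth_hz, snr_linear):
    """Shannon-Hartley capacity C = B * log2(1 + S/N) in bits per second."""
    return bandwidth_hz * math.log2(1.0 + snr_linear)

def db_to_linear(snr_db):
    """Convert an SNR in decibels to a linear power ratio."""
    return 10 ** (snr_db / 10)

print(bsc_capacity(0.11))                                     # about 0.50 bits per use
print(shannon_hartley_capacity(3000.0, db_to_linear(30.0)))   # roughly 2.99e4 bit/s
```

The BSC capacity drops to zero at p = 0.5, where the output is independent of the input, while the Shannon-Hartley capacity grows only logarithmically in the SNR but linearly in the bandwidth.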